Governing AI: From Commitment to Consequence

Scoring Company Readiness with the Canbury 4C Framework

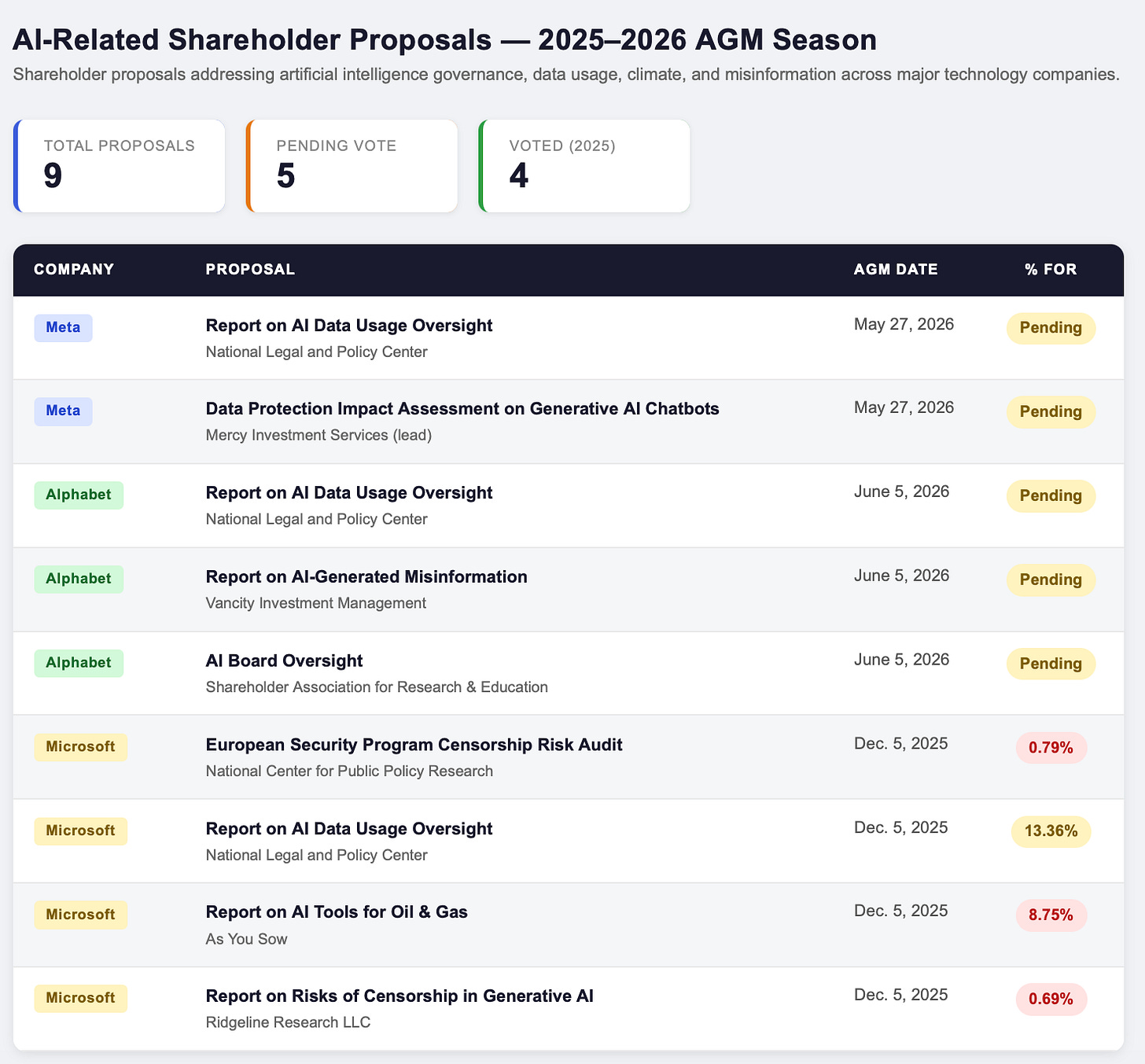

Alphabet and Meta face multiple shareholder proposals on their AI oversight, following significant regulatory fines and a lost social media addiction lawsuit.

The proposals seek better AI governance to address financial risks. Canbury’s 4C framework assesses that governance in two halves: Commitment and Capability show what a company aspires to do and whether it has built the apparatus to act, while Conduct and Consequence show whether its oversight is working.

The challenge for investors (and boards) is distinguishing the companies that have committed to governing AI well from those that are actually doing it. Given AI’s potential to disrupt operations and business models, shareholders want to know who is prepared.

The Governance Challenge

Across the emerging AI economy, companies face different governance risk profiles depending on their relationship to the technology. Three categories are useful:

· AI Architects - the companies building the models. The Architects are the direct subject of AI legislation: their models, outputs, and automated decisions are the primary target of the EU AI Act, GDPR enforcement, and antitrust scrutiny.

· AI Adjacent - those building the infrastructure that AI relies on. Semiconductor manufacturers, power generators, and data center operators are not the primary regulatory target today. But supply chain due diligence requirements are extending upstream, and these companies may face increasing pressure to demonstrate adequate governance.

· AI At-Risk - companies whose products and services are directly challenged by generative AI. AI At-Risk companies carry a dual governance obligation: managing the AI they deploy in their own products, and disclosing and addressing the strategic threat that generative AI poses to their existing product economics.

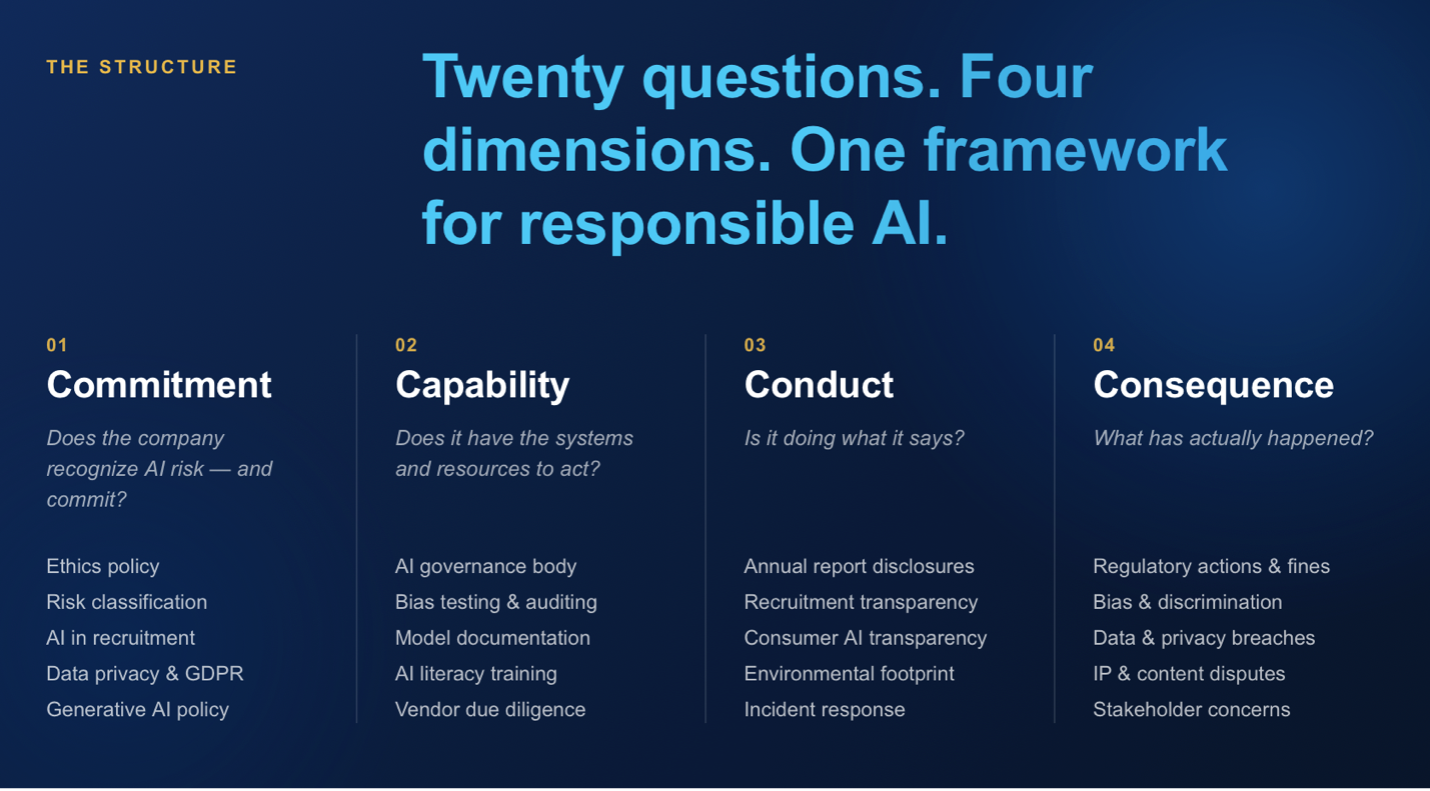

Canbury’s 4C Evaluation Framework

Canbury’s 4C Framework assesses companies across four dimensions of AI governance: Commitment — policies, public pledges, and stated positions; Capability — governance structures, systems, and resources to act on those commitments; Conduct — actual operational behavior; and Consequence — outcomes, incidents, regulatory enforcement, and litigation.

Scoring Company AI Governance

It’s still early days for AI oversight, but many companies have named a board committee responsible for AI or published an AI ethics policy, and some explicitly reference the EU AI Act.

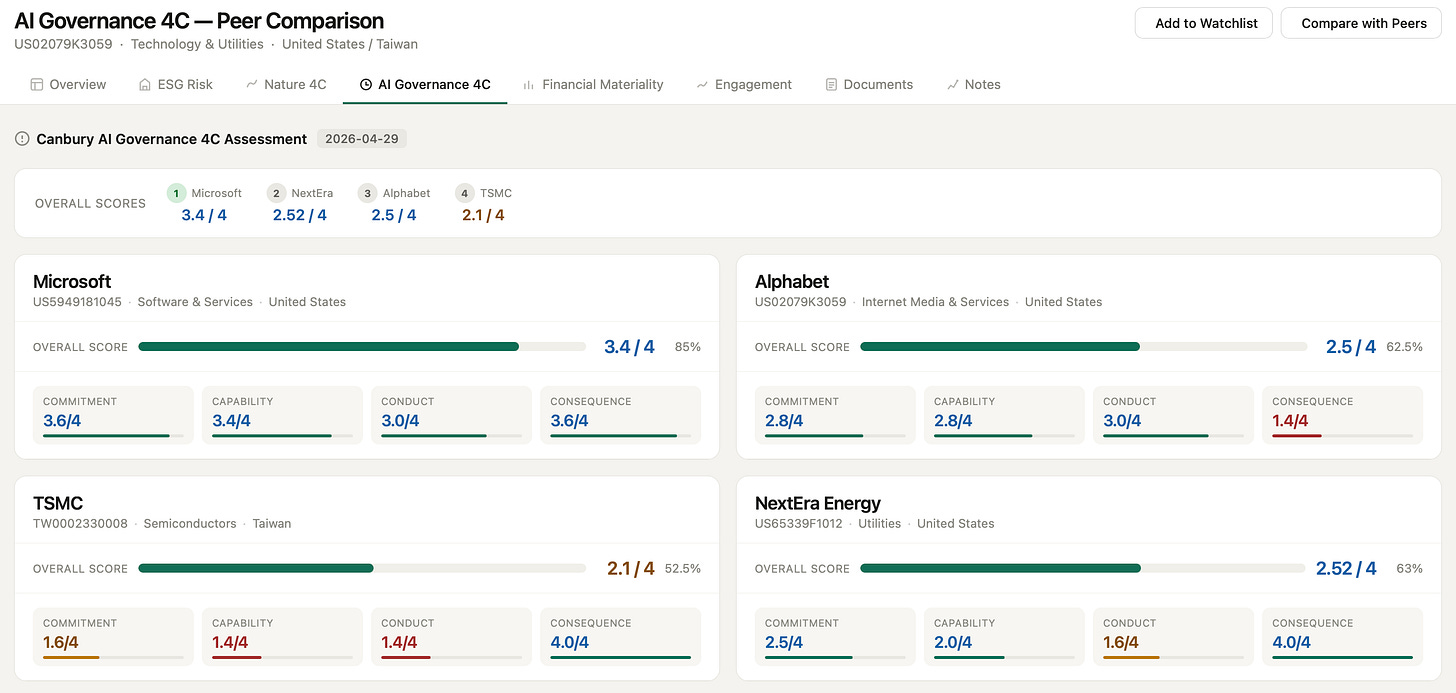

Below is a sample of the Canbury assessment for two AI Architects — Microsoft and Alphabet — and two AI Adjacent companies — TSMC and NextEra Energy.

Across these four companies, two patterns are worth tracking. The first is the say-do gap (strong commitment, weak consequence). The second is the timing gap (clean consequence, thin framework), which characterizes both infrastructure companies because neither has yet faced material AI-related consequences.
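The two gaps can be read as simple differences between dimension scores. The sketch below is illustrative only: it uses the Commitment and Consequence scores reported in the Alphabet and TSMC profiles in this post, while the 1.0-point threshold, the class, and the function names are hypothetical choices made for this example and are not part of the Canbury methodology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DimensionScores:
    """Commitment and Consequence scores on the 0-4 scale used in this post."""
    company: str
    commitment: float
    consequence: float


def classify_gap(scores: DimensionScores, threshold: float = 1.0) -> str:
    """Label the pattern a company shows.

    The threshold is an assumption for illustration, not a Canbury parameter.
    """
    diff = round(scores.commitment - scores.consequence, 1)
    if diff >= threshold:
        return "say-do gap"   # strong commitment, weak consequence
    if -diff >= threshold:
        return "timing gap"   # clean consequence, thin framework
    return "no pronounced gap"


# Scores as reported in the company profiles in this post.
alphabet = DimensionScores("Alphabet", commitment=2.8, consequence=1.4)
tsmc = DimensionScores("TSMC", commitment=1.6, consequence=4.0)

for s in (alphabet, tsmc):
    print(s.company, classify_gap(s))
```

On these figures Alphabet's Commitment exceeds its Consequence score by 1.4 points, while TSMC's Consequence score exceeds its Commitment score by 2.4 points, which is why the two companies fall on opposite sides of the comparison.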

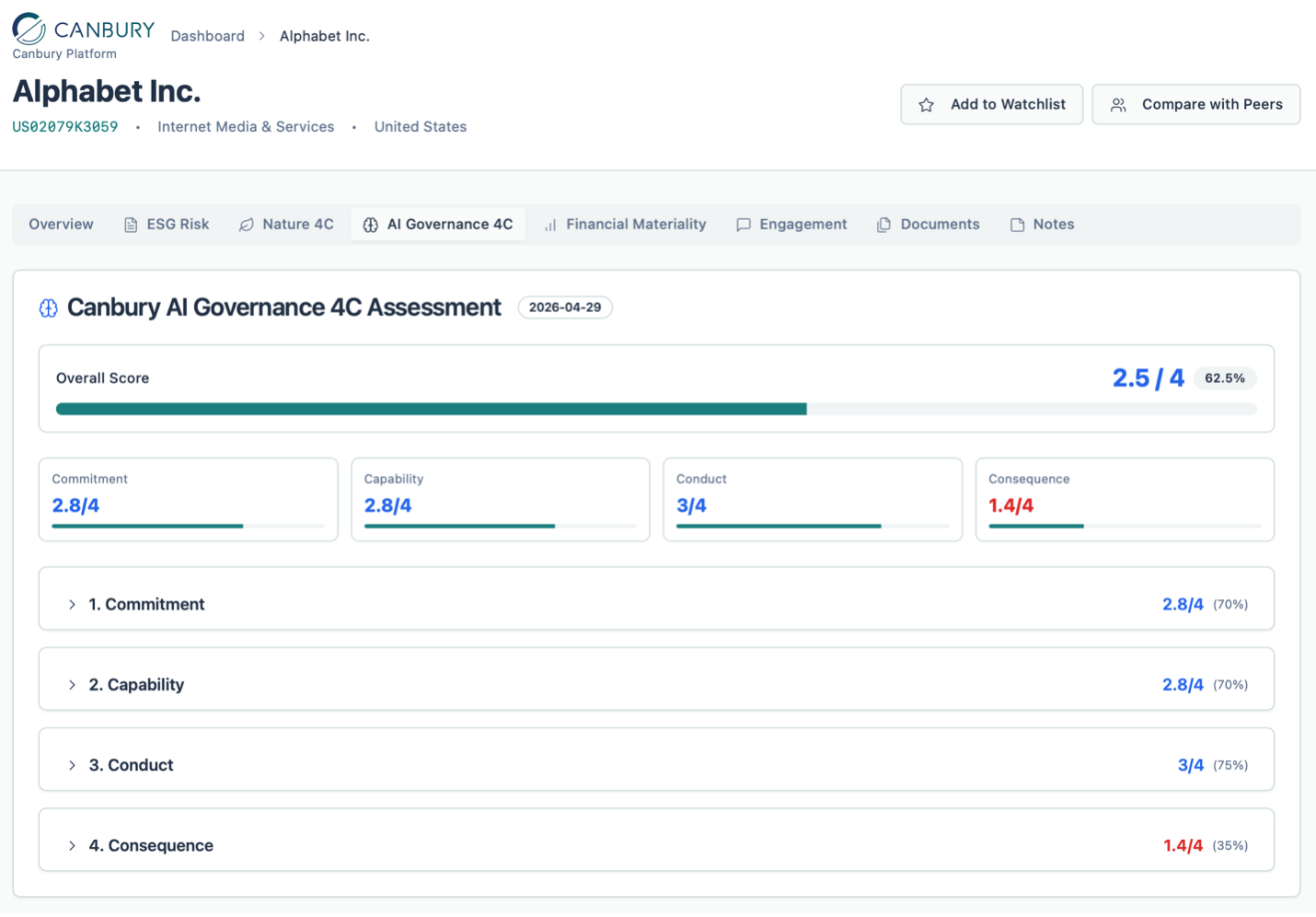

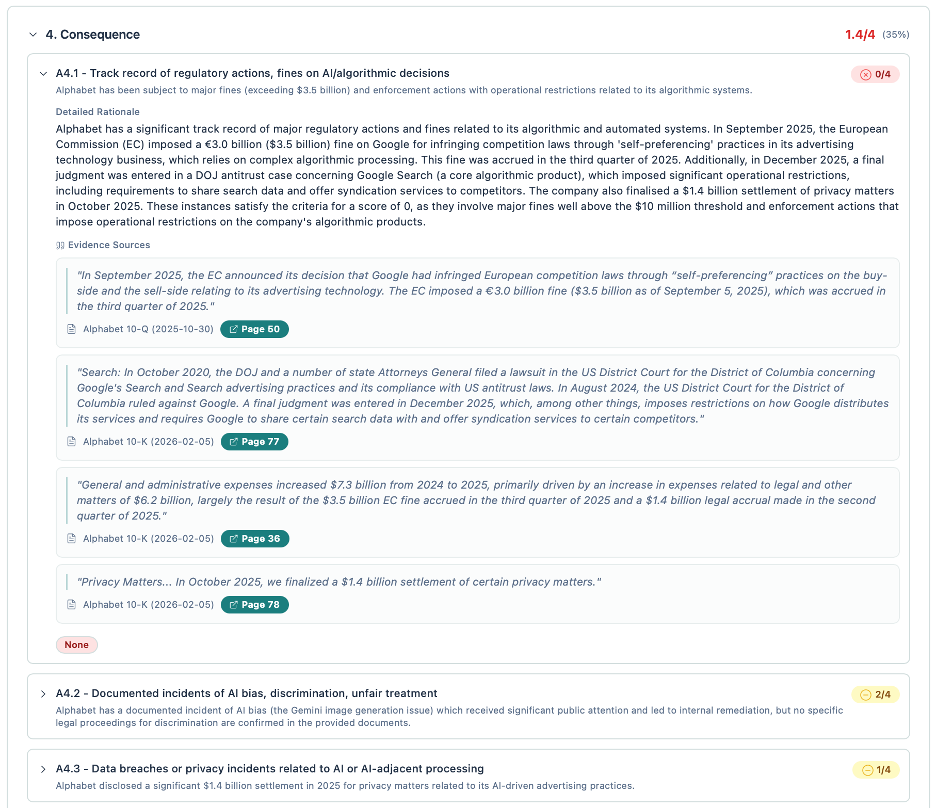

Alphabet — 2.5 / 4

Alphabet’s governance architecture is substantive: an AI Responsibility Council co-chaired at CLO level, an AGI Futures Council with board membership, six consecutive annual Responsible AI Progress Reports, and an auditable AI asset registry explicitly designed for EU AI Act compliance. The Consequence dimension scores a low 1.4/4. In 2025 the company accrued a €3.0 billion EU fine for algorithmic self-preferencing in ad tech, finalized a $1.4 billion privacy settlement, and received a final antitrust judgment on Google Search imposing data-sharing and syndication requirements. The gap between Commitment (2.8/4) and Consequence (1.4/4) is the central finding: the framework exists, but between policy and practice, the chain appears to break.

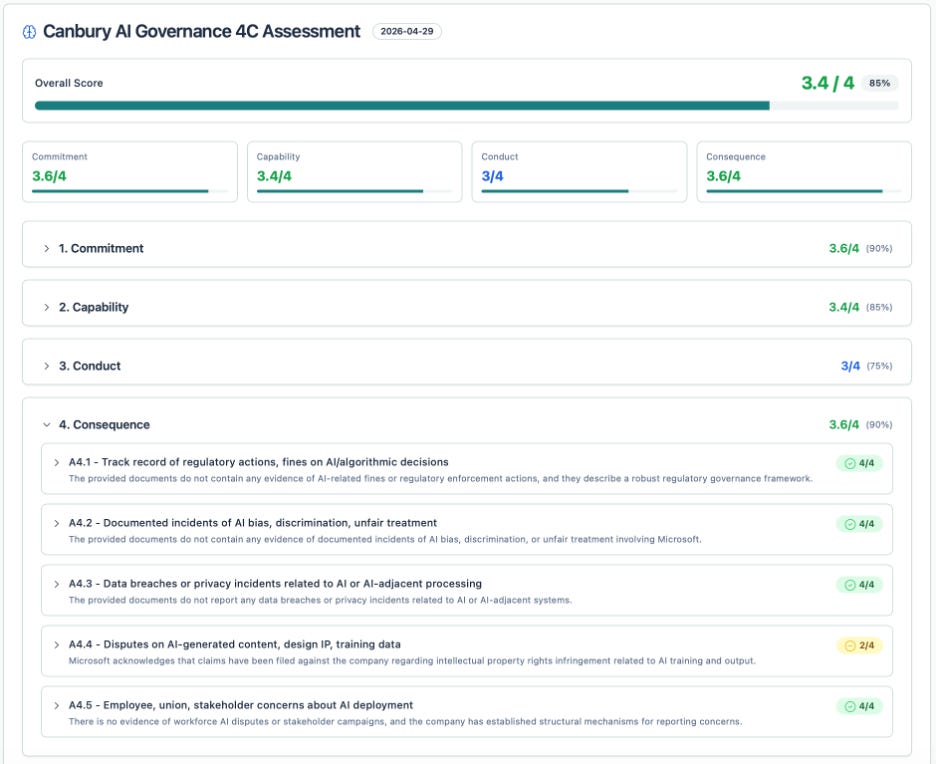

Microsoft — 3.4 / 4

Microsoft has the strongest end-to-end governance profile in this sample. The Office of Responsible AI has operated since 2019; the Responsible AI Standard is now in its second version; mandatory AI ethics training reached 99% of employees as of January 2025; and an active Sensitive Uses review process has demonstrably halted specific deployments, including disabling AI services to an Israeli Ministry of Defence unit after the company’s own investigation in September 2025. The Consequence record shows no AI-related regulatory fines, and IP claims arising from AI training data are acknowledged but not yet material. The main gaps are in Conduct: bias testing results for specific hiring tools remain unpublished, and AI-specific emissions are bundled within broader operational data.

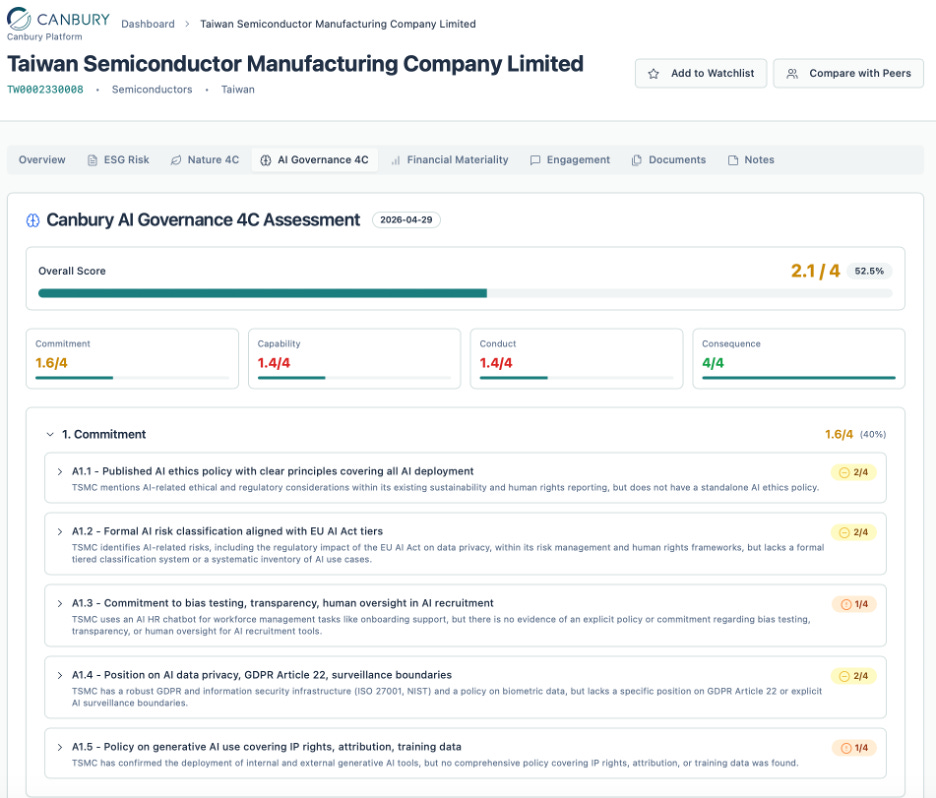

TSMC — 2.1 / 4

Chipmaker TSMC’s profile is the archetype of the AI Adjacent timing gap: a perfect Consequence score (4.0/4) — zero verified discrimination cases, no data breaches linked to AI systems, no regulatory enforcement — resting on Commitment (1.6/4) and Capability (1.4/4) scores that are the weakest in this set of companies. There is no dedicated AI governance body and no standalone AI ethics policy, despite extensive AI deployment in manufacturing process control, chip design via generative AI and LLMs, and an internal generative AI assistant (tGenie). The clean record probably owes as much to the pre-enforcement period for AI Adjacent companies as to any specific governance measures. As the EU AI Act’s supply chain requirements extend and customers intensify their own vendor governance scrutiny, a company fabricating the chips that power frontier models will face harder questions about its own posture.

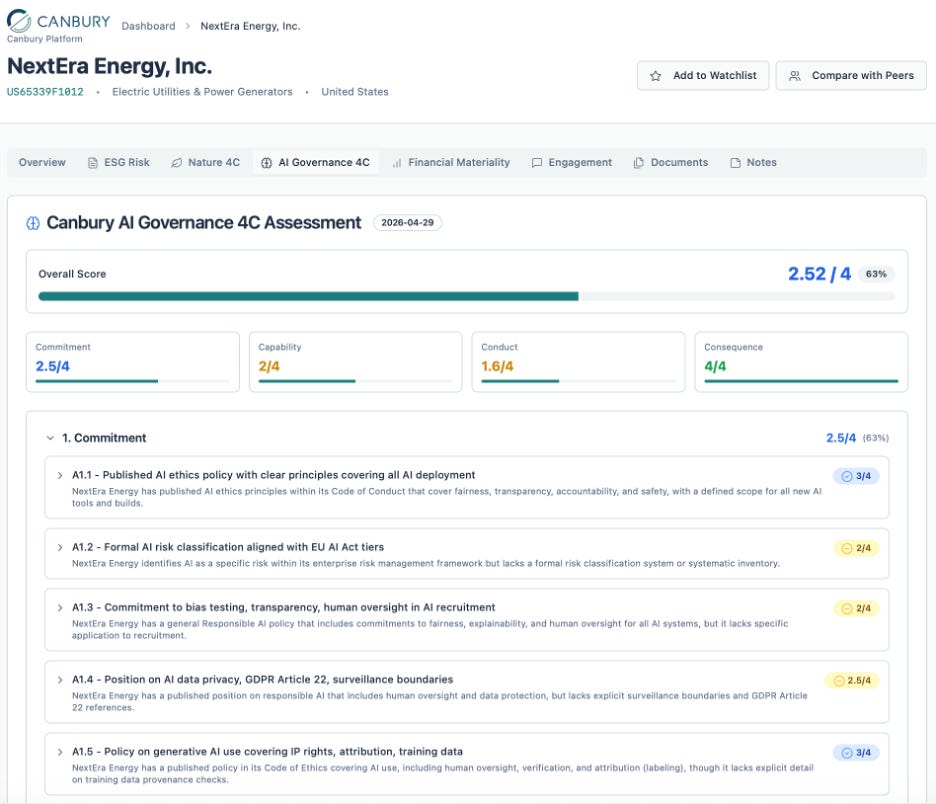

NextEra Energy — 2.52 / 4

NextEra Energy presents a comparable profile to TSMC, with a stronger governance foundation and the same timing gap. In February 2025, the company formally amended its Audit Committee Charter to assign AI risk oversight and added a ‘Responsible AI’ section to the Code of Business Conduct covering tool registration, output verification, and labelling. The lower Conduct score (1.6/4) reflects implementation gaps: no bias testing results are published, and NextEra’s incident response protocols are general cybersecurity procedures rather than AI-specific ones. While the governance direction is clear, evidence of it in practice has not yet appeared in public disclosures.

Commitment Now, Consequences Later

The EU AI Act’s penalty framework, which allows fines of up to 7% of global annual turnover for certain violations, is now operational. With billions at stake in regulatory and legal liability as well as in fundamental business growth, getting AI oversight right is critical for a large swath of companies. A governance framework is the starting point; ultimately, procedures and outcomes determine financial impact.
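To give a rough sense of scale for the 7% ceiling, the sketch below computes the maximum top-tier fine for a hypothetical company. The turnover figure is invented for illustration and does not describe any company in this post. The EUR 35 million fixed amount reflects the Act's top penalty tier, where the cap is the higher of the fixed amount and the percentage of turnover. The function and constant names are our own.

```python
TOP_TIER_RATE_PERCENT = 7          # percent of global annual turnover
TOP_TIER_FIXED_EUR = 35_000_000    # fixed alternative in the top penalty tier


def max_top_tier_fine_eur(global_turnover_eur: int) -> int:
    """Upper bound on a top-tier fine: the higher of the fixed amount or 7% of turnover."""
    percentage_cap = global_turnover_eur * TOP_TIER_RATE_PERCENT // 100
    return max(TOP_TIER_FIXED_EUR, percentage_cap)


# Hypothetical company with EUR 10 billion in global annual turnover.
hypothetical_turnover_eur = 10_000_000_000
print(f"EUR {max_top_tier_fine_eur(hypothetical_turnover_eur):,}")  # EUR 700,000,000
```

This is a ceiling, not a forecast: actual fines depend on the violation category and on the regulator's assessment.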

This post is provided for informational purposes only and does not constitute investment advice, financial guidance, or a recommendation regarding how to vote on any proxy proposal. While the analysis and figures presented are derived from public SEC filings and company reports and are believed to be accurate at the time of publication, they are provided without guarantee or warranty. Readers should conduct their own independent research and consult with a qualified professional before making any financial or voting decisions.

About Canbury

Canbury Insights is a technology-enabled sustainability consultancy applying AI tools to thoroughly and cost-efficiently deliver sustainability projects. We combine global expertise and local delivery to support organisations across the world to find the value in sustainability.

Copyright © 2026, Canbury Insights Limited. All rights reserved.